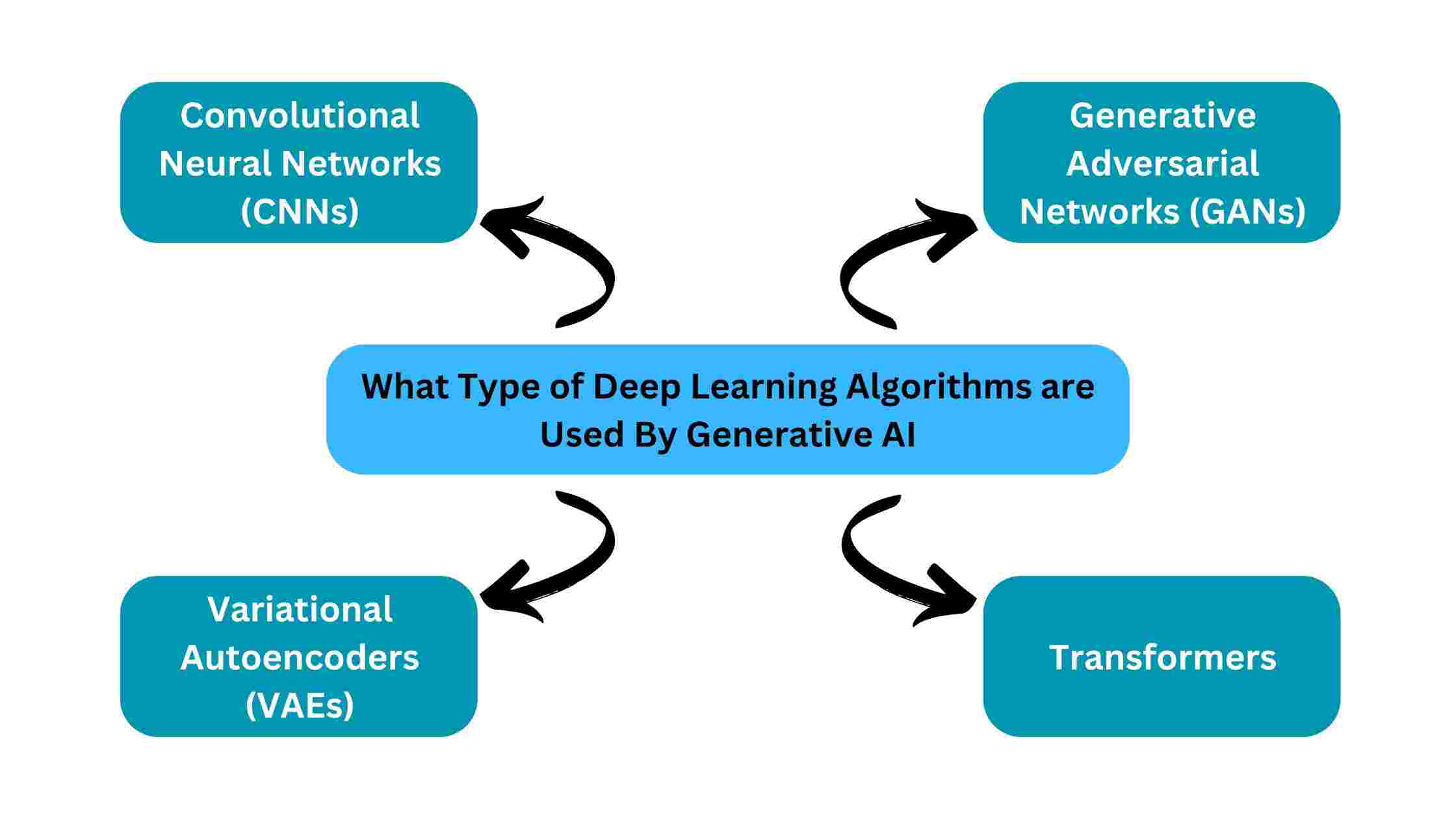

Generative AI is one of the hottest topics in technology today. The key to generative AI is deep learning algorithms. But what type of deep learning algorithms are used by generative AI?

Convolutional Neural Networks (CNNs), Variational Autoencoders (VAEs), Generative Adversarial Networks (GANs), and Transformers are the types of deep learning algorithms used by generative AI.

This blog post will explore the major deep learning models powering today’s generative AI systems. Let’s get started!

Table of Contents

- Type 1: Convolutional Neural Networks (CNNs)

- Type 2: Variational Autoencoders (VAEs)

- Type 3: Generative Adversarial Networks (GANs)

- Type 4: Transformers

- Conclusion

Type 1: Convolutional Neural Networks (CNNs)

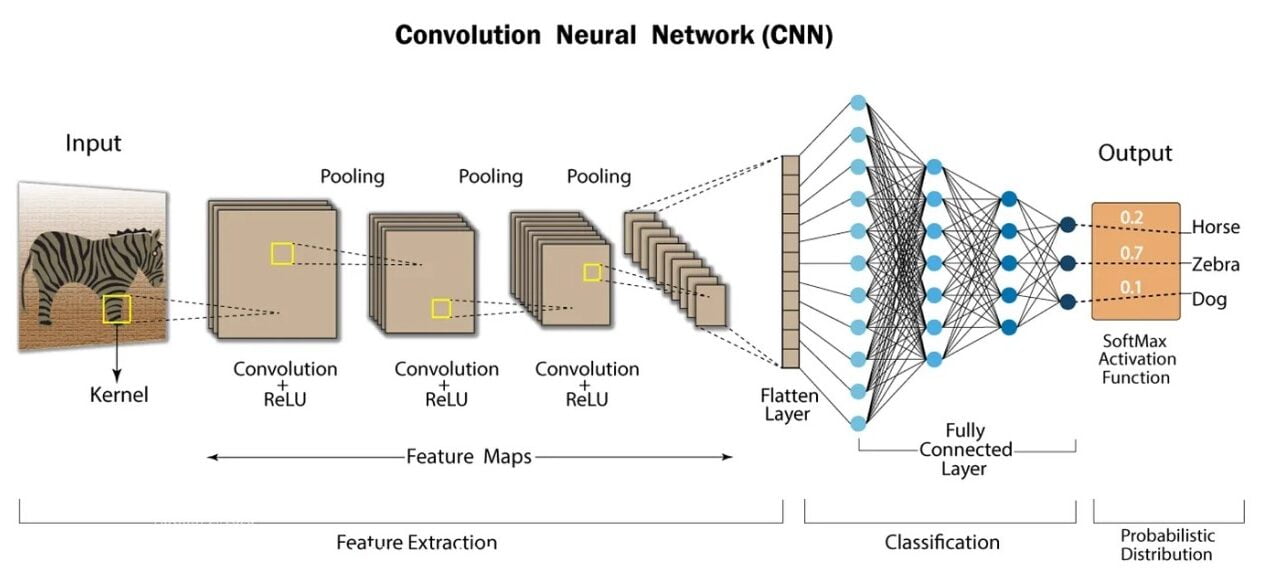

One of the key deep learning algorithms used by generative AI systems is the convolutional neural network or CNN. CNNs are a specialized type of neural network that excels at analyzing visual data like images and videos.

How CNNs Work

A CNN slides small filters across the input image, looking at one small, overlapping patch at a time. The patch that a given neuron sees is called its receptive field, and each filter responds to a specific pattern or feature, such as an edge or a texture.

As the CNN analyzes the image patch by patch, it builds up an understanding of the full image by combining the patterns detected, with deeper layers assembling simple features into more complex ones.
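To make the patch-by-patch scan concrete, here is a minimal sketch of a single convolution in plain Python. The `conv2d` helper, the toy 4x4 image, and the hand-written edge kernel are all illustrative assumptions; a real CNN learns its kernel values during training and stacks many such layers.

```python
def conv2d(image, kernel):
    """Slide `kernel` over `image` (no padding, stride 1) and return the feature map."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    feature_map = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            # Weighted sum over the patch (receptive field) whose top-left corner is (i, j).
            total = 0.0
            for di in range(kh):
                for dj in range(kw):
                    total += image[i + di][j + dj] * kernel[di][dj]
            row.append(total)
        feature_map.append(row)
    return feature_map


# Toy 4x4 image: dark left half (0), bright right half (1).
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]

# Hypothetical vertical-edge kernel: responds where brightness rises from left to right.
kernel = [
    [-1, 1],
    [-1, 1],
]

feature_map = conv2d(image, kernel)
print(feature_map)  # the middle column lights up, where the dark-to-bright edge sits
```

The feature map is nonzero only at the positions whose patch straddles the edge, which is the sense in which a filter "looks for" one pattern everywhere in the image.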

CNNs for Image Generation

While CNNs were originally developed for image classification tasks, they also play a crucial role in generative AI for image generation.

Generative adversarial networks (GANs), which we’ll cover later, often use CNNs as the generator model to produce new synthetic images.

Examples of CNNs

Some examples of how CNNs enable image generation include:

- Creating photorealistic faces of fictional people

- Rendering high-resolution images from low-res inputs

- Translating text descriptions into visuals

- Filling in missing sections of an image

The convolutional architecture of CNNs, which scans for patterns across an image, makes them well-suited for generative modeling of visual data.

As generative AI continues advancing, expect to see CNNs at the forefront of image synthesis.

Type 2: Variational Autoencoders (VAEs)

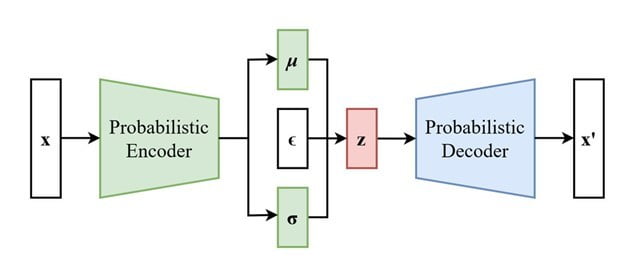

Another type of deep learning algorithm used by generative AI systems is the variational autoencoder, or VAE. VAEs are trained without labels (unsupervised learning) and can automatically discover patterns in data like images, audio, or text.

How VAEs Work

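A VAE has two main components: an encoder and a decoder. The encoder compresses the input data into a compact “encoded” (latent) representation. In a VAE, this representation is a probability distribution over latent values rather than a single fixed point.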

The decoder then tries to reconstruct the original data from just the encoded representation.

During training, the VAE learns to encode data in a way that captures the most important features.

It also learns to organize the encoded space so that sampling a point from it and passing that point through the decoder yields a new, plausible data sample.
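The sampling step can be sketched in a few lines of plain Python. This assumes the standard VAE formulation, which the post does not spell out: the encoder outputs a mean and a log-variance per latent dimension, and training adds a KL penalty that pulls the encoded distribution toward a standard normal. The function names and the example numbers are hypothetical, and the encoder and decoder networks themselves are omitted.

```python
import math
import random


def sample_latent(mu, log_var, rng):
    """Reparameterization trick: z = mu + sigma * eps, with eps drawn from N(0, 1)."""
    return [m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0) for m, lv in zip(mu, log_var)]


def kl_to_standard_normal(mu, log_var):
    """KL divergence from N(mu, sigma^2) to N(0, 1), summed over latent dimensions."""
    return -0.5 * sum(1.0 + lv - m * m - math.exp(lv) for m, lv in zip(mu, log_var))


rng = random.Random(0)

# Hypothetical encoder outputs for one input, with a 2-dimensional latent space.
mu = [0.5, -1.0]
log_var = [0.0, -2.0]

z = sample_latent(mu, log_var, rng)       # latent code the decoder would receive
kl = kl_to_standard_normal(mu, log_var)   # regularization term added to the reconstruction loss
kl_zero = kl_to_standard_normal([0.0, 0.0], [0.0, 0.0])  # already standard normal, so no penalty

print(z, kl, kl_zero)
```

Because the KL term keeps encoded distributions close to a standard normal, new samples can be generated after training by drawing `z` directly from N(0, 1) and decoding it.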

VAEs for Generative Modeling

What makes VAEs useful for generative AI is their ability to model complex, high-dimensional data distributions.

By mapping data to an encoded space, VAEs can discover the underlying patterns and distributions that characterize the data.

Applications of VAEs in Generative AI

Applications of VAEs in generative AI include:

- Generating photorealistic images and animations

- Creating new music, speech, and other audio samples

- Text generation tasks like machine translation

- Generating molecular structures for drug discovery

The unsupervised nature of VAEs allows them to extract meaningful representations from data in a self-guided way.

This maps well to the core goal of generative AI algorithms – creating new data by understanding and modeling the true data distribution.

Type 3: Generative Adversarial Networks (GANs)

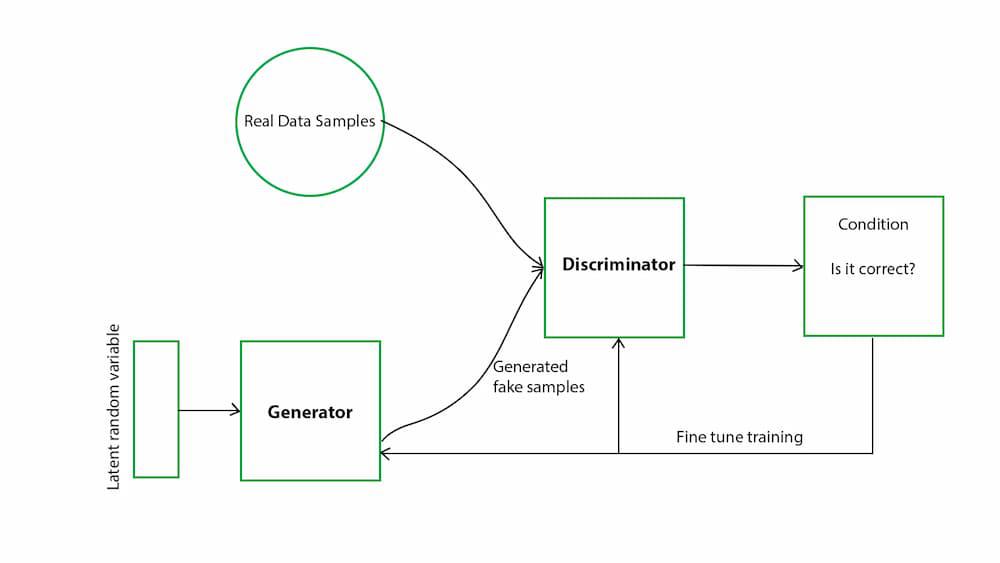

One of the most significant deep learning algorithms used by generative AI systems is the generative adversarial network, or GAN.

GANs have shown remarkable progress in generating highly realistic synthetic data across different domains.

How GANs Work

A GAN consists of two neural networks, a generator and a discriminator, that are trained together in an adversarial process.

The generator creates new data samples, while the discriminator tries to identify whether each sample is real (from the training data) or fake (created by the generator).

Over many training iterations, the generator learns to produce increasingly realistic data to fool the discriminator.

Simultaneously, the discriminator continually improves at distinguishing real from fake data. This back-and-forth competition drives both networks to improve their capabilities.
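The competition can be expressed as two loss functions. The sketch below assumes the common binary cross-entropy formulation with the non-saturating generator loss, which is one popular choice rather than the only one. The networks themselves are omitted, and the discriminator outputs are made-up numbers used purely for illustration.

```python
import math


def bce(prediction, target):
    """Binary cross-entropy for a single probability, clipped to avoid log(0)."""
    eps = 1e-12
    p = min(max(prediction, eps), 1.0 - eps)
    return -(target * math.log(p) + (1.0 - target) * math.log(1.0 - p))


def discriminator_loss(d_real, d_fake):
    """The discriminator wants D(real) close to 1 and D(fake) close to 0."""
    real_term = sum(bce(p, 1.0) for p in d_real) / len(d_real)
    fake_term = sum(bce(p, 0.0) for p in d_fake) / len(d_fake)
    return real_term + fake_term


def generator_loss(d_fake):
    """The generator wants the discriminator to rate its fakes as real (close to 1)."""
    return sum(bce(p, 1.0) for p in d_fake) / len(d_fake)


# Hypothetical discriminator outputs: probability that each sample is real.
d_real = [0.9, 0.8]
early_fake = [0.1, 0.2]  # early in training, fakes are easy to spot
late_fake = [0.5, 0.6]   # later, fakes are harder to tell apart

print(generator_loss(early_fake), generator_loss(late_fake))
print(discriminator_loss(d_real, early_fake), discriminator_loss(d_real, late_fake))
```

As the fakes become more convincing, the generator's loss falls while the discriminator's loss rises, which is the push and pull described above.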

GANs for Generative Modeling

The adversarial training process of GANs equips them with an exceptional ability to capture the underlying probability distribution of real data.

This makes GANs one of the most powerful generative AI algorithms for a wide variety of applications:

- Photorealistic image generation and image-to-image translation

- Creating deep fake videos and audio samples

- Procedural generation of 3D objects and environments

- Text generation tasks like dialogue, stories, and lyrics

While GANs can be notoriously difficult to train, their adversarial approach often yields sharper samples than models such as VAEs, which tend to produce blurrier outputs.

This has made GANs the algorithm of choice for many generative AI use cases.

Type 4: Transformers

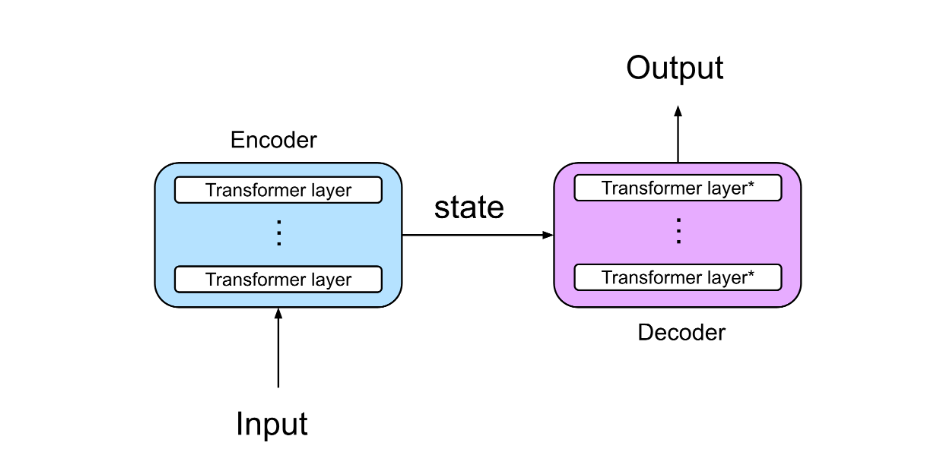

While convolutional neural networks and generative adversarial networks are powerful for generating visual data like images and videos, transformers have emerged as the leading deep learning algorithm for the generative modeling of text and language data.

What are Transformers?

Transformers are a type of neural network architecture that utilizes an attention mechanism to learn contextual relationships between different parts of the input data.

This allows them to model sequential data like text, audio, or time series in a highly effective way.

The key innovation behind transformers is self-attention: each part of the input gets weighted connections to the other relevant parts, which lets the model capture long-range dependencies.

This enables transformers to understand and generate coherent sequences better than previous models.
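Here is a minimal sketch of scaled dot-product self-attention in plain Python. As a simplifying assumption, the token vectors are used directly as queries, keys, and values; a real transformer first passes them through learned projection matrices and runs several attention heads in parallel. The toy vectors are hypothetical.

```python
import math


def softmax(scores):
    """Turn raw scores into weights that are positive and sum to 1."""
    highest = max(scores)
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """Scaled dot-product attention: each query becomes a weighted mix of the values."""
    d_k = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(d_k).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in keys]
        weights = softmax(scores)
        # Weighted average of the value vectors, one output dimension at a time.
        mixed = [sum(w * v[j] for w, v in zip(weights, values)) for j in range(len(values[0]))]
        outputs.append(mixed)
        all_weights.append(weights)
    return outputs, all_weights


# Three toy "token" vectors; self-attention uses the same sequence for Q, K, and V.
x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = attention(x, x, x)

for row in weights:
    print([round(w, 3) for w in row])
```

Each row of `weights` shows how strongly one token attends to every token in the sequence, regardless of how far apart they are, which is what allows long-range dependencies to be captured.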

Transformers for Text Generation

The best-known examples of generative AI models using transformers are large language models like GPT-3 (Generative Pre-trained Transformer 3).

These models are first pre-trained on vast datasets to build broad knowledge and can then be fine-tuned for specific text generation tasks.

Applications of Transformers in Generative AI

Some key applications of transformers in generative AI include:

- Open-ended text generation for storytelling, dialog, essays, etc.

- Code/program generation from natural language descriptions

- Language translation between different languages

- Question-answering and information extraction

Multimodal models like DALL-E that combine text and images also leverage transformers for the language understanding component. As generative AI continues advancing, self-attention and transformer architecture will likely play an increasingly vital role across modalities.

Conclusion

In conclusion, the deep learning algorithms used by generative AI systems primarily include convolutional neural networks, variational autoencoders, generative adversarial networks, and transformers. As we’ve seen, each of these algorithms brings unique strengths to generative modeling across different data types and domains. With rapid innovation in this space, the deep learning algorithms utilized by generative AI will likely continue expanding in exciting new directions.

Ajay Rathod loves talking about artificial intelligence (AI). He thinks AI is super cool and wants everyone to understand it better. Ajay has been working with computers for a long time and knows a lot about AI. He wants to share his knowledge with you so you can learn too!