Generative AI is an exciting technology that can create human-like text, images, and more. Tools like ChatGPT and DALL-E are making generative AI popular. But with great power comes great responsibility.

The responsibility of developers using generative AI includes understanding limitations, mitigating bias, ensuring data security, and educating users about the technology’s capabilities and potential risks.

In this blog, we will explore what generative AI is, how it works, and what responsibilities developers have when using it. Let’s get started!

Table of Contents

- Understanding Generative AI

- Responsibility of Developers Using Generative AI

- Building a Better Future with Generative AI

- Conclusion

Understanding Generative AI

Generative AI is a type of artificial intelligence that can create new content. This could be text, images, audio, or even computer code. Generative AI works by learning patterns from a huge amount of existing data. It then uses those patterns to generate brand-new content that can seem human-made.

Some popular generative AI tools are ChatGPT for text, DALL-E for images, and GitHub Copilot for code. These tools are becoming very powerful and useful. But they also raise important questions about bias, privacy, security, and misuse.

That’s why developers using generative AI need to be careful and responsible. The next section covers what those responsibilities are.

Responsibility of Developers Using Generative AI

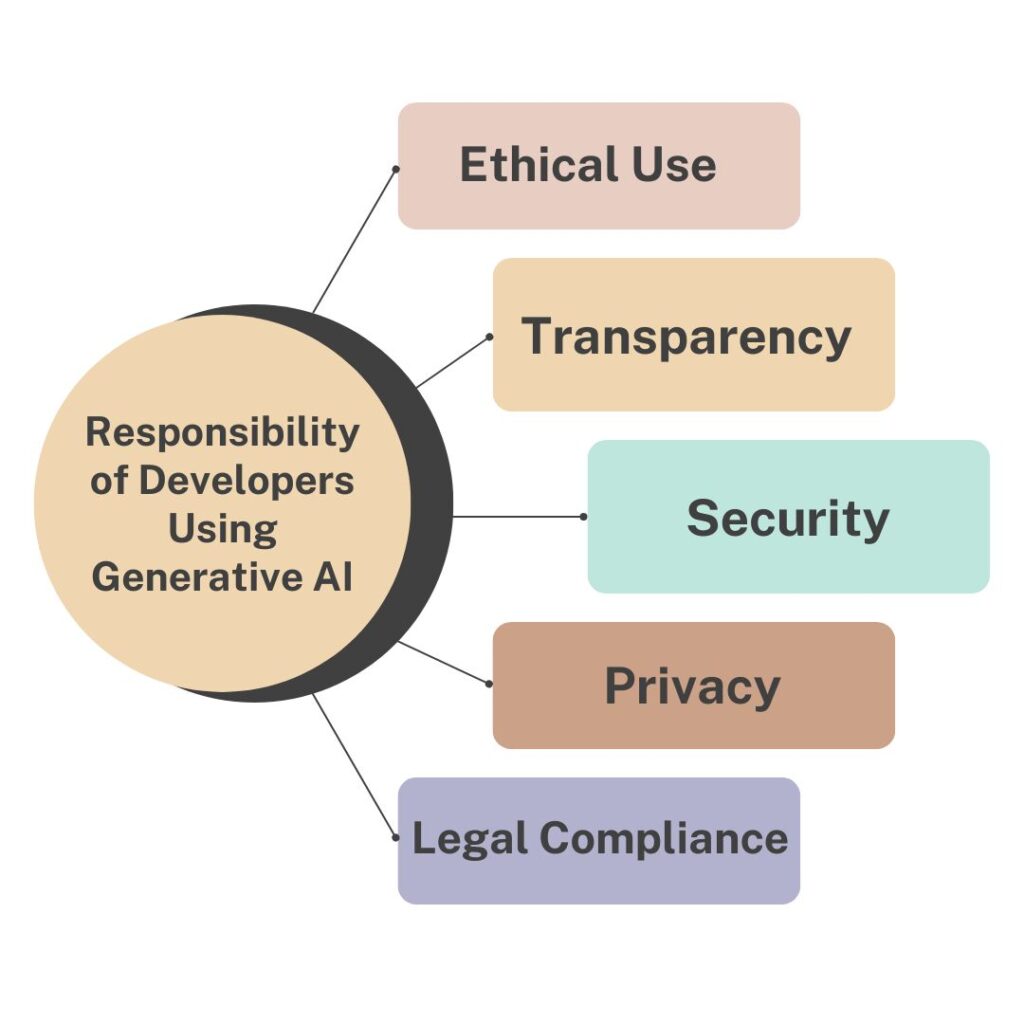

As generative AI becomes more widespread, developers who use this technology have some important responsibilities. They need to be mindful of several key areas:

Ethical Use

Developers must ensure their use of generative AI is ethical and doesn’t cause harm. This means watching out for biases in the training data and outputs. It also means not creating misinformation, hate speech, or other harmful content.

Transparency

When using generative AI, developers should be upfront about it. They need to properly cite sources and clearly disclose which content is AI-generated. This transparency helps build trust with users.
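One way to make disclosure a habit is to attach it to the content itself, so it cannot be forgotten at publishing time. The sketch below is only an illustration in Python: the `GeneratedContent` class, its field names, and the wording of the notice are all hypothetical, not part of any particular tool or standard.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class GeneratedContent:
    """AI-generated text bundled with the provenance a reader should see."""

    text: str
    model_name: str  # hypothetical identifier, e.g. "example-model-v1"
    sources: list[str] = field(default_factory=list)
    created_at: datetime = field(
        default_factory=lambda: datetime.now(timezone.utc)
    )

    def render(self) -> str:
        """Return the text followed by a disclosure notice and any sources."""
        notice = f"[AI-generated by {self.model_name} on {self.created_at:%Y-%m-%d}]"
        lines = [self.text, "", notice]
        if self.sources:
            lines.append("Sources:")
            lines.extend(f"- {source}" for source in self.sources)
        return "\n".join(lines)


if __name__ == "__main__":
    draft = GeneratedContent(
        text="Generative AI learns patterns from existing data.",
        model_name="example-model-v1",
        sources=["https://example.com/background-article"],
    )
    print(draft.render())
```

Because the notice is produced by `render()`, any page or message that displays the content through this method carries the disclosure and the source list with it.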

Security

Generative AI models could be exploited for malicious purposes like spreading fake news or hacking. Developers must implement robust security measures to prevent misuse.

Privacy

Training generative AI on personal data raises privacy concerns. Developers should protect user privacy and follow data protection regulations.
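A common first step toward protecting privacy is to strip obvious personal details from text before it is stored or sent to a model. The Python sketch below shows the idea with two deliberately simple regular expressions. The function name `redact_personal_data` and the placeholder tokens are assumptions made for illustration, and patterns this basic will miss some personal data and flag some harmless numbers, so a real system would need a more thorough approach.

```python
import re

# Deliberately simple patterns for illustration only; they are not exhaustive.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact_personal_data(text: str) -> str:
    """Replace email addresses and phone-like numbers with placeholders."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    text = PHONE_RE.sub("[PHONE]", text)
    return text


if __name__ == "__main__":
    prompt = "Contact jane.doe@example.com or +1 (555) 123-4567 today."
    print(redact_personal_data(prompt))
```

Running the redaction before a prompt leaves the application means the model provider never receives the raw values, which also reduces what has to be protected downstream.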

Legal Compliance

There are laws and guidelines around AI use that developers must follow. This includes respecting intellectual property rights.

These responsibilities aren’t simple, but they’re crucial as generative AI grows more powerful. Developers pioneering this tech have an obligation to wield it responsibly.

Building a Better Future with Generative AI

Generative AI has immense potential to revolutionize various industries and aspects of our lives. However, we must be cautious and use this powerful technology responsibly to build a better future.

If developers collaborate and adhere to ethical principles, we can harness the full potential of generative AI while mitigating its risks.

Key responsibilities for developers to build a better future with generative AI include:

1. Prioritizing Ethics

- Thoroughly testing AI systems to identify and remove biases and inaccuracies

- Ensuring generative AI does not discriminate or promote harmful content

2. Maintaining Transparency

- Clearly disclosing when content is AI-generated

- Properly citing sources to build trust with users

3. Protecting Privacy and Security

- Adhering to data privacy laws and regulations

- Implementing robust security measures to prevent misuse or exploitation

4. Fostering Collaboration and Knowledge Sharing

- Developing best practices and guidelines for responsible AI use

- Sharing insights and lessons learned within the developer community

As generative AI continues to evolve, new challenges will inevitably arise. However, by working together, developers can address these challenges collectively and adapt to the changing landscape of this transformative technology.

Conclusion

As generative AI becomes more powerful, it’s crucial for developers to understand their responsibilities when using it. They must prioritize ethics, transparency, privacy, security, and collaboration. By taking these responsibilities seriously, developers can harness generative AI’s potential while preventing misuse and harm. Responsible practices will shape a better future with this transformative technology.

Ajay Rathod loves talking about artificial intelligence (AI). He thinks AI is super cool and wants everyone to understand it better. Ajay has been working with computers for a long time and knows a lot about AI. He wants to share his knowledge with you so you can learn too!
