Artificial Intelligence (AI) is changing the way machines learn and solve problems. One fascinating technique in AI is Explanation Based Learning. But what exactly is it?

Explanation Based Learning in Artificial Intelligence is a method where AI systems learn by understanding the reasons behind examples, not just memorizing them. It’s like teaching a computer to think, not just remember.

In this blog, we’ll explore how this clever AI technique works. We’ll look at why it’s important, how it works, its components, and where it’s used in the real world.

Table of Contents

- Understanding Explanation Based Learning in Artificial Intelligence

- The Role of Explanation Based Learning in Artificial Intelligence Systems

- How Does Explanation Based Learning Work in AI?

- Real-world Applications of Explanation Based Learning in Artificial Intelligence

- Key Components of EBL in Artificial Intelligence

- Challenges and Limitations of EBL in AI

- The Future of Explanation Based Learning in Artificial Intelligence

- Conclusion

Understanding Explanation Based Learning in Artificial Intelligence

Explanation Based Learning (EBL) in AI is a smart way for machines to learn. Unlike purely data-driven methods that need lots of examples, EBL can learn from as little as one example, because it uses what the system already knows to understand why that example works.

Imagine teaching a child. You could show them many pictures of dogs, or you could explain what makes a dog a dog. EBL in AI works like the second approach. It learns the rules, not just the examples.

In EBL, an AI system looks at a few examples and tries to figure out the underlying principles. It uses what it already knows about the world to make sense of new information. This helps the AI learn faster and apply its knowledge to new situations.

The Role of Explanation Based Learning in Artificial Intelligence Systems

Explanation Based Learning plays a crucial role in making AI systems smarter and more efficient. Let’s break down its importance:

1. Enhancing AI’s Understanding

- Explanation Based Learning helps AI grasp the ‘why’ behind information

- It moves AI from simple pattern recognition to deeper comprehension

2. Improving Efficiency

- AI learns from fewer examples

- Saves time and computational resources

3. Better Problem-Solving

- AI can tackle new, unseen problems

- Uses learned principles to find solutions creatively

4. Making AI More Human-like

- Mimics human learning by focusing on explanations

- Leads to more natural and intuitive AI behavior

5. Bridging the Gap Between Data and Knowledge

- Turns raw data into meaningful, usable knowledge

- Helps AI build a more structured understanding of its domain

By incorporating Explanation Based Learning, AI systems become more than just data processors. They transform into intelligent entities capable of reasoning and applying knowledge in diverse situations.

This approach brings us one step closer to creating truly smart and adaptable artificial intelligence.

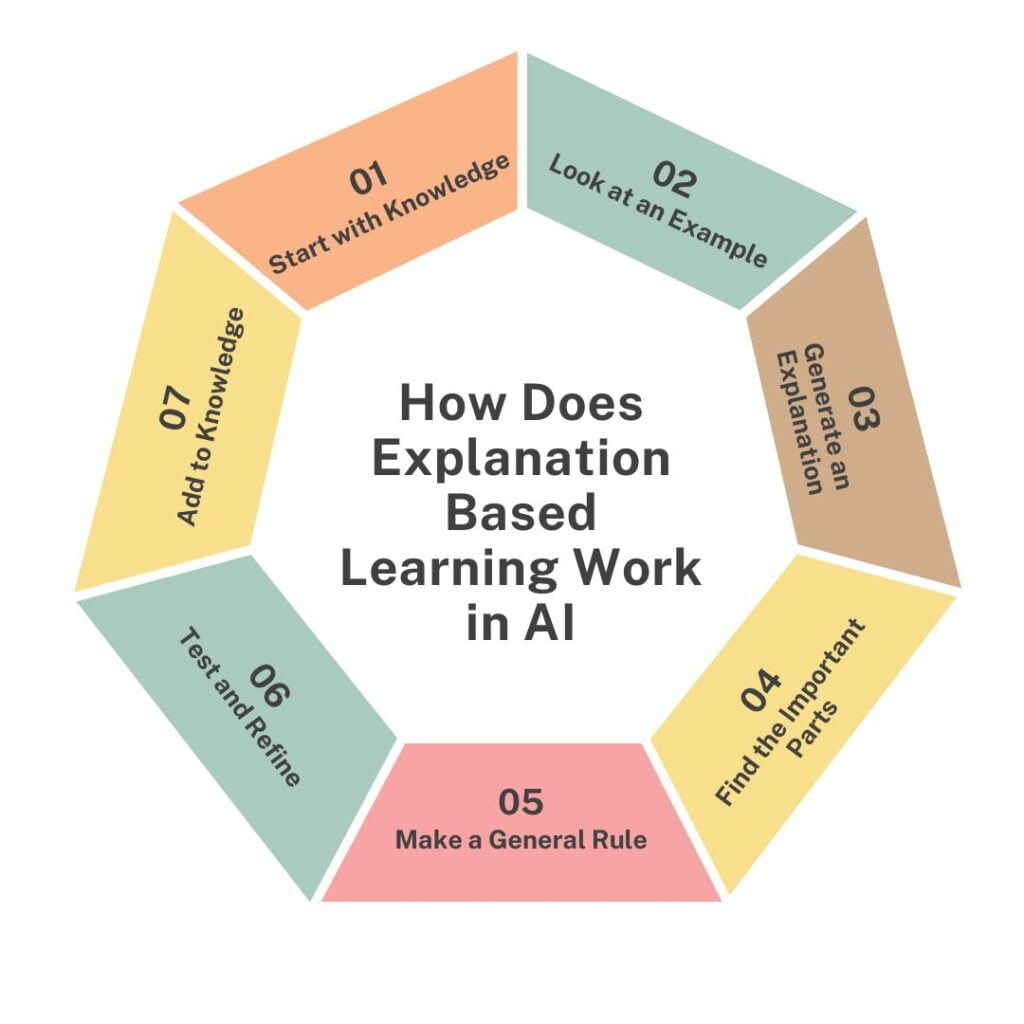

How Does Explanation Based Learning Work in AI?

Explanation Based Learning in AI works through a series of steps that help the system understand and learn from examples. Let’s break it down in simple terms:

1. Start with Knowledge

The AI system begins with some basic knowledge about the world or the specific area it’s learning about.

2. Look at an Example

The AI is given a specific example to learn from. This could be a problem and its solution, or a situation and its outcome.

3. Generate an Explanation

Using its existing knowledge, the AI tries to explain why the example works the way it does. It’s like figuring out the logic behind a puzzle.

4. Find the Important Parts

The AI identifies which parts of the explanation are crucial for solving the problem or understanding the situation.

5. Make a General Rule

Based on what it learned from the example, the AI creates a broader rule that can apply to similar situations.

6. Test and Refine

The AI might try its new rule on other examples to make sure it works well. If needed, it adjusts the rule to make it better.

7. Add to Knowledge

Finally, the AI adds this new rule to its knowledge base, ready to use it in future problems.

This process helps AI learn more efficiently and deeply. Instead of just remembering lots of examples, it learns to understand and explain them, making its knowledge more useful and flexible.
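The steps above can be sketched in a few lines of Python. This is a minimal sketch under simplifying assumptions, not a full EBL system: the "cup" domain theory, the feature names, and the helper functions `explain` and `learn_rule` are all hypothetical and chosen only for illustration. It also skips step 6 (test and refine) and uses simple yes/no features, whereas real EBL systems usually reason over rules with variables.

```python
# Step 1: hypothetical domain knowledge. Each concept holds when ALL of its
# listed sub-conditions hold.
DOMAIN_THEORY = {
    "cup": ["liftable", "stable", "open_vessel"],
    "liftable": ["light", "has_handle"],
    "stable": ["flat_bottom"],
    "open_vessel": ["has_concavity", "concavity_points_up"],
}


def explain(goal, facts):
    """Steps 3 and 4: try to prove `goal` from the observed `facts`.

    Returns the observed features the proof relied on, or None if the
    domain theory cannot explain the goal.
    """
    if goal in facts:
        return [goal]          # directly observed, so it is a leaf of the proof
    if goal not in DOMAIN_THEORY:
        return None            # not observed and no rule can derive it
    leaves = []
    for sub_goal in DOMAIN_THEORY[goal]:
        proof = explain(sub_goal, facts)
        if proof is None:
            return None        # one missing condition breaks the explanation
        leaves.extend(proof)
    return leaves


def learn_rule(goal, facts, knowledge_base):
    """Steps 5 and 7: turn the explanation into a rule and store it."""
    leaves = explain(goal, facts)
    if leaves is None:
        return None
    rule = (goal, tuple(leaves))   # "goal holds if all of these features hold"
    knowledge_base.append(rule)
    return rule


# Step 2: a single training example, including features that do not matter.
example = {
    "light", "has_handle", "flat_bottom",
    "has_concavity", "concavity_points_up",
    "red", "made_of_ceramic",
}

knowledge_base = []
rule = learn_rule("cup", example, knowledge_base)
print(rule)
```

In this sketch the learned rule keeps only the five features the explanation actually used. The colour and material of the object never appear in the proof, so they are left out of the rule, which is the "find the important parts" step at work.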

Real-world Applications of Explanation Based Learning in Artificial Intelligence

Explanation Based Learning in AI isn’t just a cool idea – it’s being used in many real-world applications. Here are some exciting ways it’s making a difference:

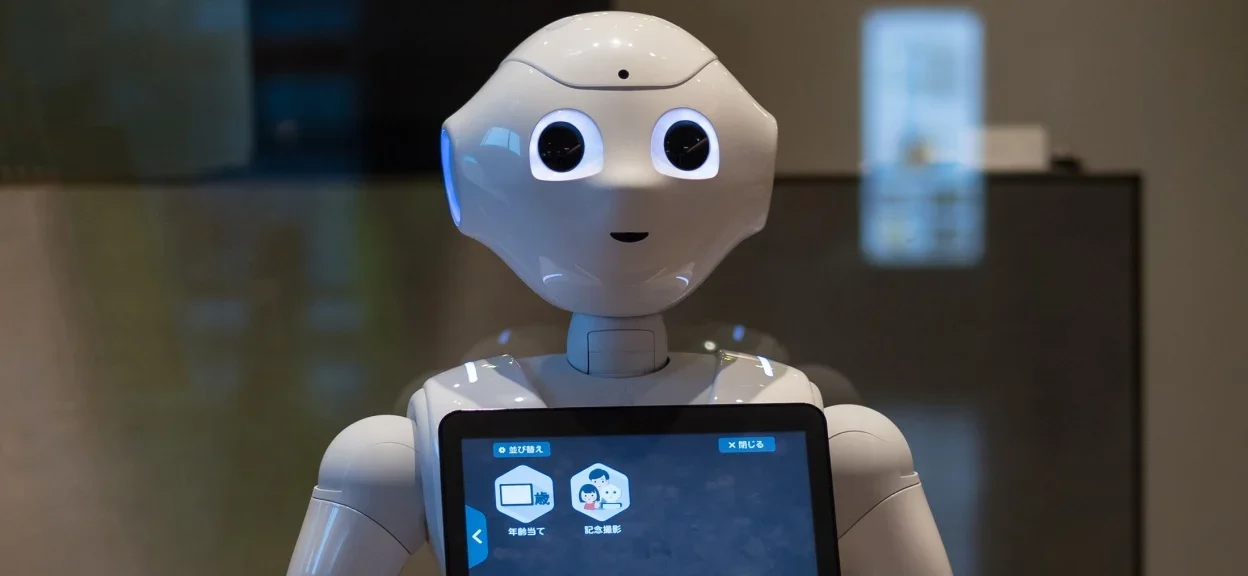

Example 1: Smart Robots

AI-powered robots use EBL to learn new tasks faster. They don’t just copy movements; they understand why certain actions work. This helps them adapt to new situations quickly.

Example 2: Improved Customer Service

AI chatbots with EBL can better understand customer issues. They learn from each interaction, figuring out the reasons behind common problems. This lets them provide more helpful and accurate responses over time.

Example 3: Medical Diagnosis

In healthcare, EBL helps AI systems learn from complex medical cases. By understanding the reasoning behind diagnoses, these systems can assist doctors with more accurate and explainable recommendations.

Example 4: Smarter Self-Driving Cars

EBL allows self-driving cars to make better decisions. They learn not just the rules of the road, but why those rules exist. This helps them handle unexpected situations more safely.

Example 5: Enhanced Language Translation

AI translators using EBL can grasp the context and nuances of languages better. They learn the reasons behind language structures, leading to more natural and accurate translations.

These examples show how Explanation Based Learning is making AI more useful and reliable in our everyday lives. It’s helping machines think more like us, making them better at solving real-world problems.

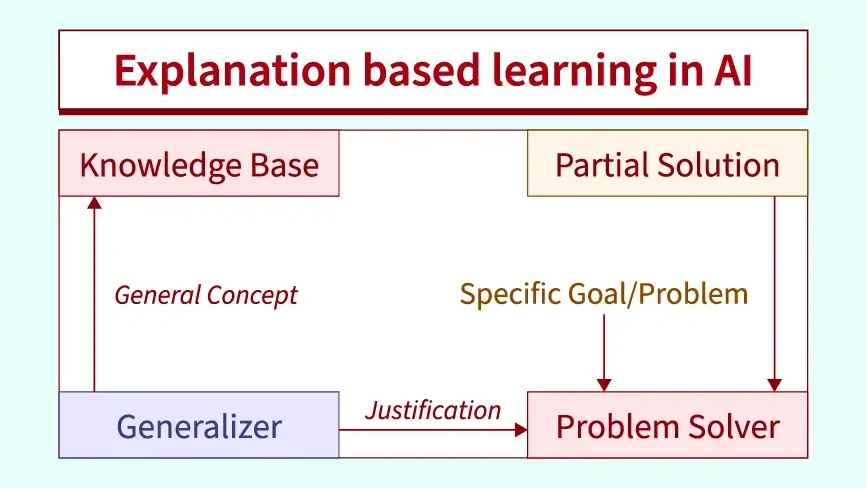

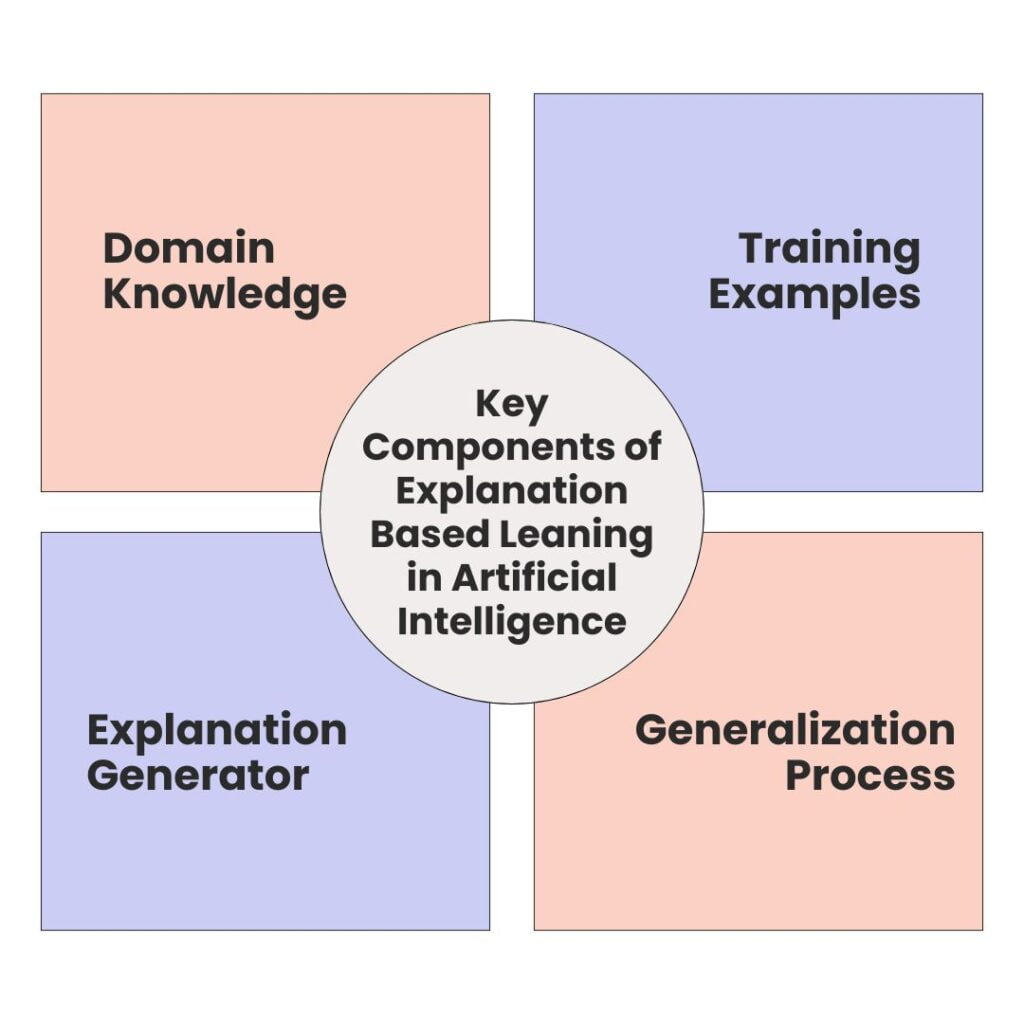

Key Components of EBL in Artificial Intelligence

Explanation Based Learning in AI has four main parts that work together:

Domain Knowledge

This is what the AI already knows about a topic. It’s like the basic facts and rules the AI starts with. For example, if the AI is learning about cars, it might know that cars have wheels and engines.

Training Examples

These are specific cases the AI looks at to learn from. They’re real situations or problems that show how things work. Using our car example, a training example might be how a particular car model performs on different roads.

Explanation Generator

This part of the AI figures out why things happen. It uses the domain knowledge to explain the training examples. It’s like the AI’s way of saying, “Oh, I see why this car works well on bumpy roads – it has good suspension!”

Generalization Process

Here, the AI takes what it learned from the specific example and makes a broader rule. It decides which parts of the explanation are most important and can apply to other situations. So, it might conclude that cars with good suspension generally perform better on rough terrain.

These four parts help the AI not just remember facts, but understand and use them. It’s what makes Explanation Based Learning in AI so powerful for solving new problems and adapting to different situations.
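One way to picture how the four parts fit together is the short Python sketch below, built around the car example. Everything in it is an assumption made for illustration: the `Rule` class, the two domain rules, the feature names, and the functions `generate_explanation` and `generalize` are hypothetical, and a real system would be considerably richer.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    head: str     # the conclusion, e.g. "handles_rough_roads"
    body: tuple   # conditions that must all hold for the conclusion


# 1. Domain knowledge: hypothetical rules the AI starts with.
DOMAIN_KNOWLEDGE = [
    Rule("handles_rough_roads", ("absorbs_bumps",)),
    Rule("absorbs_bumps", ("good_suspension",)),
]

# 2. Training example: one specific car and what was observed about it.
TRAINING_EXAMPLE = {
    "name": "car_a",
    "features": {"good_suspension", "blue_paint", "four_doors"},
    "outcome": "handles_rough_roads",
}


# 3. Explanation generator: chain rules until the outcome is explained.
def generate_explanation(goal, features, rules):
    """Return the list of (conclusion, conditions) steps proving `goal`,
    or None if the domain knowledge cannot explain it."""
    if goal in features:
        return []                      # observed directly, nothing to prove
    for rule in rules:
        if rule.head != goal:
            continue
        steps = []
        for condition in rule.body:
            sub_steps = generate_explanation(condition, features, rules)
            if sub_steps is None:
                break                  # this rule does not apply, try the next
            steps.extend(sub_steps)
        else:
            return steps + [(rule.head, rule.body)]
    return None


# 4. Generalization process: keep only the observed features the
#    explanation used, and drop the specific car.
def generalize(goal, explanation, features):
    used = [c for _, body in explanation for c in body if c in features]
    return Rule(goal, tuple(used))


explanation = generate_explanation(
    TRAINING_EXAMPLE["outcome"], TRAINING_EXAMPLE["features"], DOMAIN_KNOWLEDGE
)
learned = generalize(
    TRAINING_EXAMPLE["outcome"], explanation, TRAINING_EXAMPLE["features"]
)
print(learned)
```

The learned rule says that a car with good suspension handles rough roads. The paint colour and door count drop out because the explanation never needed them, and the rule no longer mentions `car_a`, so it can be applied to other cars.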

Challenges and Limitations of EBL in AI

While Explanation Based Learning in AI is powerful, it has some drawbacks. One big issue is that it heavily relies on having good starting knowledge. If the AI’s initial information is wrong, its explanations and learning will be off too.

Another problem is that EBL can sometimes make rules that are too broad. This means the AI might think its learning applies to more situations than it should.
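A small sketch can show how weak starting knowledge leads to a rule that is too broad. The rules and objects below are hypothetical: assume the AI's knowledge of cups forgot that a cup must be open at the top, so the rule it learned only checks three features.

```python
def matches(conditions, features):
    """A rule fires when every one of its conditions is among the features."""
    return all(condition in features for condition in conditions)


# Hypothetical rule learned from incomplete knowledge (no "open on top" check).
too_broad_rule = ("light", "has_handle", "flat_bottom")

# Hypothetical rule learned from more complete knowledge.
stricter_rule = too_broad_rule + ("has_concavity", "concavity_points_up")

coffee_mug = {
    "light", "has_handle", "flat_bottom",
    "has_concavity", "concavity_points_up",
}
sealed_paint_can = {"light", "has_handle", "flat_bottom", "sealed_lid"}

print(matches(too_broad_rule, coffee_mug))        # True
print(matches(too_broad_rule, sealed_paint_can))  # True: wrongly called a cup
print(matches(stricter_rule, coffee_mug))         # True
print(matches(stricter_rule, sealed_paint_can))   # False
```

The too-broad rule accepts the sealed paint can because nothing in the AI's starting knowledge told it that being open at the top matters. The learning step worked as designed; the gap was in the knowledge it started from.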

EBL also struggles with very complex problems. When there are too many factors to consider, it gets hard for the AI to create clear explanations.

Lastly, EBL can be slow compared to other AI learning methods, especially when dealing with large amounts of data, because it has to build an explanation for every example it learns from.

The Future of Explanation Based Learning in Artificial Intelligence

The future of Explanation Based Learning in AI looks bright. As AI keeps growing, EBL will likely play a bigger role. We might see AI that can explain its decisions better, making it more trustworthy.

EBL could team up with other AI methods, creating smarter systems that learn faster and more deeply.

In the coming years, EBL might help AI tackle more complex problems in fields like medicine, science, and engineering.

It could lead to AI that not only solves problems but also teaches humans new things. As EBL improves, we may see AI that thinks more like humans, understanding the ‘why’ behind what it learns.

Conclusion

In conclusion, Explanation Based Learning in Artificial Intelligence is a powerful tool that’s changing how AI thinks and learns. It helps AI understand the ‘why’ behind information, not just the ‘what’. This makes AI smarter, more flexible, and better at solving new problems. As AI continues to grow, EBL will play a key role in creating more intelligent and human-like systems that can truly understand and explain their knowledge.

Ajay Rathod loves talking about artificial intelligence (AI). He thinks AI is super cool and wants everyone to understand it better. Ajay has been working with computers for a long time and knows a lot about AI. He wants to share his knowledge with you so you can learn too!